- #Cave technology grid mapping virtual reality plus

- #Cave technology grid mapping virtual reality simulator

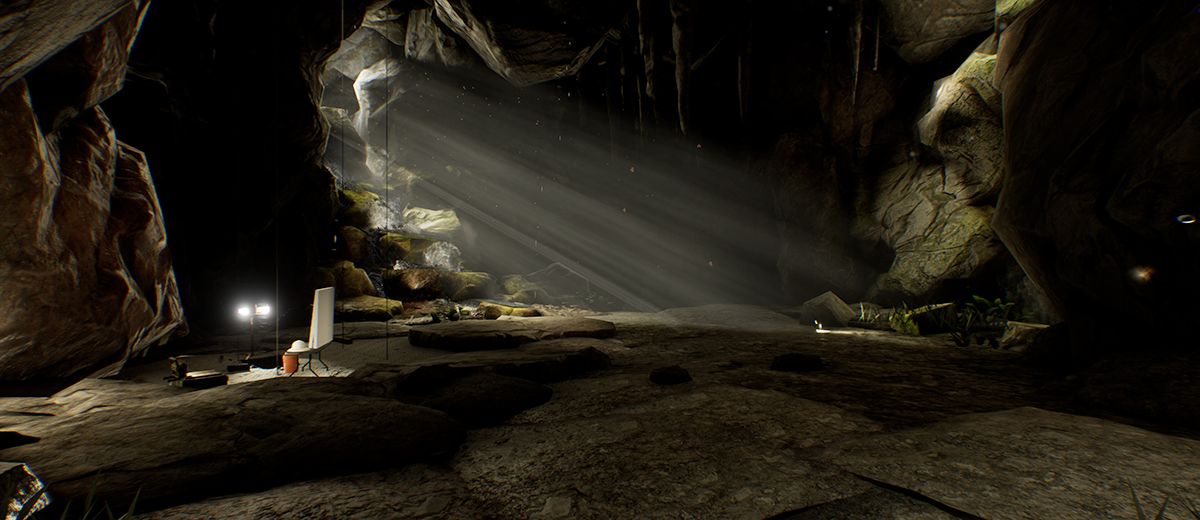

After moving from the textual computer to the flatland monitor in the previous decade, the 1990s saw the emergence of Virtual Reality (VR) as the next technological frontier. VR is currently coming out of the research labs to reach a mainstream audience.

You can adapt the projection with our virtual canvas warp grid (VC), either to fit slightly curved screens or to move the viewer’s position by adding or removing keystone characteristics. But what if that is not enough? Content spaces enable you to transform a mapping to fit all kinds of special purposes.

How can I project something on a specified area of the screen? This technique is called “projection mapping”: an image, or “texture”, is warped to be projected on the screen surface. For that we need a model of the screen with texture coordinates for every surface. This method is similar to texturing 3D objects in virtual worlds. Once we have matched the model with the camera and the projectors, we can read the texture coordinate from the 3D surface, sample the image at that coordinate, and project the result back onto the real surface.

Virtual cameras are viewports to virtual-reality environments. They are defined by an eye position, view direction, rotation, and fields of view related to the virtual world. Our projection screen can be seen as a window to that virtual world. The first step is to match the screen with the simulated environment. This is done by matching a virtual screen model to the actual screen (an augmented reality approach). In other words, if we project a virtual screen on the real screen and the two match, we know which projector pixel is affected by each viewport’s virtual camera image. Then we can create a mapping that transforms a viewport’s image to be displayed by a projector.
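To make the viewport idea concrete, here is a minimal sketch of how a 3D point on the screen could be mapped into a virtual camera's image. It assumes a simple pinhole camera with a symmetric field of view; the function name `viewport_uv`, the right-handed coordinate convention, and the use of an `up` vector to express rotation are illustrative assumptions, not part of Anyblend.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def viewport_uv(point, eye, forward, up, fov_h_deg, fov_v_deg):
    """Map a 3D screen point to normalized viewport coordinates (u, v).

    Hypothetical helper: (0, 0) is the top-left corner of the viewport
    image and (1, 1) the bottom-right. Returns None if the point lies
    behind the camera or outside the field of view.
    """
    f = normalize(forward)
    r = normalize(cross(f, up))      # camera right axis
    u_axis = cross(r, f)             # camera up axis, orthogonal to f and r
    d = tuple(p - e for p, e in zip(point, eye))
    depth = dot(d, f)
    if depth <= 0:
        return None                  # point is behind the eye
    x = dot(d, r) / depth            # tangent of the horizontal angle
    y = dot(d, u_axis) / depth       # tangent of the vertical angle
    half_w = math.tan(math.radians(fov_h_deg) / 2)
    half_h = math.tan(math.radians(fov_v_deg) / 2)
    u = 0.5 + 0.5 * x / half_w
    v = 0.5 - 0.5 * y / half_h       # v grows downward (image convention)
    if not (0 <= u <= 1 and 0 <= v <= 1):
        return None
    return (u, v)

# A point straight ahead of the eye lands in the centre of the viewport.
print(viewport_uv((0, 0, -2), (0, 0, 0), (0, 0, -1), (0, 1, 0), 90, 90))
```

If the 3D position of every projector pixel on the screen is known, evaluating such a function per pixel gives a lookup from projector pixels to viewport image coordinates, which is the kind of mapping described above.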

The standard case is a flat-surface projection where the camera is positioned where the audience is or will be. Once the setup is done, all projectors are blended and a rectangular content image is projected back through all projectors.
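As an illustration of what blending two overlapping projectors involves, the following sketch computes per-pixel multipliers across an overlap zone. It assumes a plain linear ramp in light output and a display gamma of 2.2; both are assumptions made for the example, and the function `blend_weights` is hypothetical rather than the blend function Anyblend actually uses.

```python
def blend_weights(x, overlap_start, overlap_end, gamma=2.2):
    """Return (left, right) pixel multipliers at horizontal position x.

    Hypothetical helper: x, overlap_start and overlap_end share the same
    unit (for example normalized screen width). Left of the overlap only
    the left projector contributes, right of it only the right one.
    """
    if x <= overlap_start:
        t = 0.0
    elif x >= overlap_end:
        t = 1.0
    else:
        t = (x - overlap_start) / (overlap_end - overlap_start)
    # In linear light the two contributions are (1 - t) and t, summing to 1.
    # Pixel values are gamma-encoded, so apply the inverse gamma to each.
    left = (1.0 - t) ** (1.0 / gamma)
    right = t ** (1.0 / gamma)
    return left, right

left, right = blend_weights(0.5, 0.4, 0.6)
# The emitted light of both projectors adds up to full brightness.
print(left ** 2.2 + right ** 2.2)
```

The design point is that the ramp is defined on emitted light, not on pixel values, so the overlap does not appear brighter or darker than the rest of the image.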


Content Spaces

A content space is the image to be projected. There can be several content spaces in one setup. Content spaces are texture maps and viewports. In a basic projection setup, the content space is the camera image plus the warping grid.

Like every camera-based projector calibration, the basis of all calculations is a camera scan, which registers every projector pixel to the camera. With a few extra parameters, this scan enables us to register the screen to the camera and the projectors to the actual screen. What benefits arise from that? If you know every projector pixel’s 3D coordinate in the real world, you can calculate viewports, warp and blend maps, and eye-point-dependent transformations.

What if we already know where every projector pixel points? Then we can take advantage of that: place a 3D model in the ray fields of the projectors and calculate warping and blending from it. So basically all features known from Anyblend can be applied to a screen, or any other surface, without any cameras.

To calibrate a system that is spread over several PCs as a cluster, we use an available Ethernet connection. The following conditions apply:

- Every projector is “seen” completely by one camera.
- The camera used to calibrate a projector must be connected to the same PC as that projector.
- Use network camera(s) if you need a camera for multiple PCs.
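The viewport calculation mentioned above can be sketched as follows: given the 3D positions that one projector covers on the screen and an eye point, find the smallest frustum that contains them all. The function `frustum_for_points`, its return format, and the fixed view direction are assumptions made for this illustration; how Anyblend chooses the view direction and computes viewports is not described here.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def frustum_for_points(points, eye, forward=(0, 0, -1), up=(0, 1, 0)):
    """Return the asymmetric frustum angles (degrees) enclosing all points.

    Hypothetical helper: angles are measured from the view direction,
    negative to the left/bottom and positive to the right/top.
    """
    f = normalize(forward)
    r = normalize(cross(f, up))
    u_axis = cross(r, f)
    xs, ys = [], []
    for p in points:
        d = tuple(pc - ec for pc, ec in zip(p, eye))
        depth = dot(d, f)
        if depth <= 0:
            raise ValueError("point is behind the eye point")
        xs.append(dot(d, r) / depth)       # horizontal tangent
        ys.append(dot(d, u_axis) / depth)  # vertical tangent
    return {
        "left": math.degrees(math.atan(min(xs))),
        "right": math.degrees(math.atan(max(xs))),
        "bottom": math.degrees(math.atan(min(ys))),
        "top": math.degrees(math.atan(max(ys))),
    }

# Corner points of a 2 x 2 screen patch, one unit in front of the eye.
corners = [(-1, -1, -1), (1, -1, -1), (1, 1, -1), (-1, 1, -1)]
print(frustum_for_points(corners, eye=(0, 0, 0)))
# Moving the eye point changes the frustum, hence "eye point dependent".
print(frustum_for_points(corners, eye=(0.5, 0, 0)))
```

Running the same calculation with a tracked head position instead of a fixed eye point is one way to picture the eye-point-dependent transformations the text refers to.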


In most simulator or CAVE setups, there are several renderers, each with single or multiple outputs. Use one or more cameras to calibrate a projection system that resides on several computers.

Main concepts of Anyblend VR&SIM Cluster calibration:

- Projection mapping, augmented reality using textures projected on surfaces.
- Simulator setup on half dome, cylindrical panorama, or irregular screens.
- Master export: Collect all warp/blend maps on the master machine and copy them to the appropriate location on each client for VIOSO plugin enabled IGs.
- Multi-camera calibration: Use fast re-calibration on screens where one camera can’t see it all at once.
- 3D Mapping: Create sweet spot independent warp maps for head tracked CAVE™ solutions.
- Templated viewport export: Export a settings file that can be included in the IG configuration for automatic viewport settings.
- Calculation of viewports according to screen and eye point: Create mappings for every IG channel to the screen.
- Model-based warp: Create warp and blend from model and projector intrinsic parameters without a camera.
- Mapping conversions: Create texture maps from a camera scan and a screen model.
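A warp map of the kind listed above can be pictured as a per-pixel lookup table: for each projector pixel it stores the coordinate in the content image to sample from. The sketch below applies such a table with bilinear sampling. The data layout (nested lists, normalized `(u, v)` pairs, `None` for unmapped pixels) and the function names are assumptions for illustration and do not reflect VIOSO's actual map format.

```python
def sample_bilinear(image, u, v):
    """Sample a grayscale image (list of rows) at normalized (u, v) in [0, 1]."""
    height, width = len(image), len(image[0])
    x = u * (width - 1)
    y = v * (height - 1)
    x0, y0 = int(x), int(y)
    x1 = min(x0 + 1, width - 1)
    y1 = min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy

def apply_warp(content, warp_map):
    """Build the projector image by looking up each pixel in the content.

    warp_map[row][col] is a (u, v) pair, or None where the projector
    pixel does not hit the screen; those pixels are left black.
    """
    return [
        [0.0 if uv is None else sample_bilinear(content, uv[0], uv[1])
         for uv in row]
        for row in warp_map
    ]

content = [[0.0, 1.0],
           [2.0, 3.0]]
warp_map = [[(0.0, 0.0), (1.0, 0.0)],
            [(0.5, 0.5), None]]
print(apply_warp(content, warp_map))
```

In a real system this lookup would typically run on the GPU for every frame; the list-based version only shows the data flow.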